I developed this project together with my colleague Simon Maris during our joint research at XLab @ Burg Giebichenstein University of Art and Design (2020-2022). Find out more about the XLab below.

This creative technology project is from the early days of (diffusion-based) text-to-image generators: It was August 2022 and Stable Diffusion had just come out.

Our research question

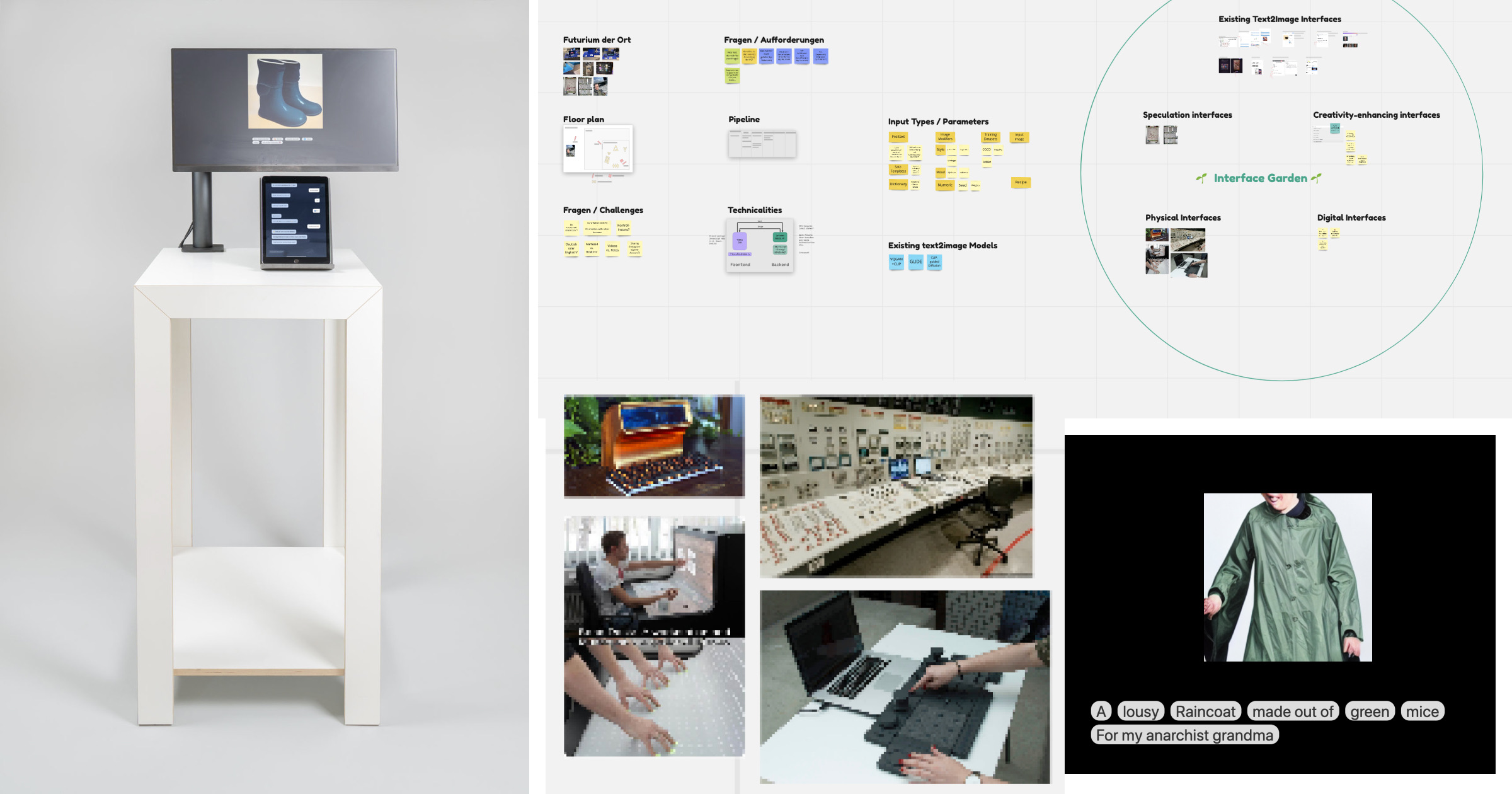

Our research question was: Which alternative (speculative) user interfaces can we imagine for text-to-image generation? Which interfaces can stimulate or hinder human creativity? Which interfaces support “human-AI co-creation” (a term we were quite obsessed with at the time)?

The prototype

Our proposed solution was to frame the prompt construction process as a conversational experience (aka a chatbot), taking the shape of a physical installation with a touch screen.

In our system the prompt is constructed from individual words and sentence fragments, which are playfully queried via a conversation with a chatbot.

Why not offer a free text input instead? We hypothesized that a guided conversation creates constraints that are desirable in some situations, e.g. to stay within a specific (creative) domain and avoid getting off topic.

Building up the prompt iteratively can lower the barrier for the user to be inventive “on the spot”.

Post-processing the assembled prompt in the backend allowed us to do some additional prompt engineering to achieve a coherent visual aesthetic.
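
To make the idea more concrete, here is a minimal sketch of how a prompt could be assembled from fragments picked during the conversation and then post-processed with a style suffix. This is not our original code: the type `DialogueStep`, the functions `pickFragment` and `buildPrompt`, the example questions and the style suffix are all hypothetical and only illustrate the principle.

```typescript
// Hypothetical sketch: all names, questions and fragments are illustrative,
// not taken from the original Verboscope code.
interface DialogueStep {
  question: string;   // what the chatbot asks
  options: string[];  // sentence fragments the user can choose from
}

const steps: DialogueStep[] = [
  { question: "What do you want to see?", options: ["a lighthouse", "a fox", "a city"] },
  { question: "Where is it?", options: ["on the moon", "under water", "in a forest"] },
  { question: "What is the mood?", options: ["at dawn", "in a storm"] },
];

// Assumed style suffix that the backend appends for a coherent look.
const STYLE_SUFFIX = "risograph print, limited color palette";

// Returns the fragment the user picked for one step of the conversation.
function pickFragment(step: DialogueStep, choice: number): string {
  const fragment = step.options[choice];
  if (fragment === undefined) {
    throw new RangeError(`No option ${choice} for "${step.question}"`);
  }
  return fragment;
}

// Joins the picked fragments and appends the style suffix (post-processing).
function buildPrompt(fragments: string[], styleSuffix: string = STYLE_SUFFIX): string {
  const subject = fragments
    .map((f) => f.trim())
    .filter((f) => f.length > 0)
    .join(" ");
  return styleSuffix ? `${subject}, ${styleSuffix}` : subject;
}

// Simulate a user who picks option 0, 0 and 1 in the three steps.
const choices = [0, 0, 1];
const fragments = steps.map((step, i) => pickFragment(step, choices[i] ?? 0));
const examplePrompt = buildPrompt(fragments);
console.log(examplePrompt);
```

Because the user only ever chooses from predefined fragments, the resulting prompt stays within the domain the dialogue author intended, and the suffix is applied uniformly to every image.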

Exhibition at Futurium Berlin

This installation was exhibited at the Futurium Berlin as part of the exhibition “Burglabs present” in summer 2022.

Here’s a video from 2022 about the project (it’s in German, but you can read the transcript below). Video made by Rosenpictures Filmproduktion.

Video transcript

Alexa: I’m Alexa Steinbrück, I’m an artist and software developer and I work as a scientific associate at XLab.

Simon: I’m Simon Maris, architect and now scientific associate at XLab for robotics and AI.

Alexa: In recent years, there has been an explosion of generative machine learning models and we are gradually integrating them into our artistic processes.

Simon: One example of this is what are known as text-to-image models.

Alexa: Text-to-image algorithms are, as the name suggests, systems that can translate any input text into a photorealistic image. This input text is also called a prompt.

Alexa: Our research question was: What could interfaces for these text-to-image systems look like that support the creative process?

Alexa: We built an initial prototype, which we call the ‘Verboscope’. It is a conversation-based interface – a chatbot so to speak – that guides the user through the process of creating prompts.

Alexa: We are clearly at the beginning of a journey and want to explore further how this tool can specifically support designers and artists in their process. This means, for example, asking how the dialogue flow needs to be adapted so that it can be used by a graphic designer who wants to design a film poster.

Technicalities

The app is written with Svelte (SvelteKit).

For the exhibition we ran the Stable Diffusion model offline and locally on a stand-alone PC using a tool called “cog”.
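
For readers who want to try a similar setup: a model packaged with Cog exposes a small HTTP API, by default on port 5000, that accepts `POST /predictions` with a JSON body of the form `{"input": {...}}`. The following sketch shows how a frontend could talk to it. It is not our original code; the function names are hypothetical, and the input field `prompt` as well as the shape of `output` depend on the packaged model.

```typescript
// Hypothetical sketch of a client for a locally running Cog container.
// Assumptions: server at http://localhost:5000, model input field "prompt".
interface CogRequest {
  url: string;
  init: {
    method: string;
    headers: Record<string, string>;
    body: string;
  };
}

// Builds the HTTP request without sending it, which keeps it easy to test.
function buildCogRequest(prompt: string, baseUrl: string = "http://localhost:5000"): CogRequest {
  return {
    url: `${baseUrl}/predictions`,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ input: { prompt } }),
    },
  };
}

// Sends the request and returns the model output as-is.
// What "output" contains (e.g. image URLs or data URIs) depends on the model.
async function generateImage(prompt: string): Promise<unknown> {
  const { url, init } = buildCogRequest(prompt);
  const response = await fetch(url, init);
  if (!response.ok) {
    throw new Error(`Cog server returned HTTP ${response.status}`);
  }
  const result = (await response.json()) as { output?: unknown };
  return result.output;
}
```

Since everything runs on localhost, an installation built this way does not need an internet connection at the exhibition venue.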

About XLab @ Burg Giebichenstein

- From 2020 to 2022 I was co-lead of the first lab for AI at a German art school, the Burg Giebichenstein University of Art and Design. Read more about my work at the lab here: https://alexasteinbruck.medium.com/learnings-after-2-5-years-of-running-an-ai-lab-at-an-art-university-xlab-c4fbd5179ed8

- Our podcast “Towards co-creation”, where Simon & I talk with artists and designers about their use of AI technologies (2020-2022): https://open.spotify.com/show/3m3R68eu4hwM8xI3VDUCGJ

Image credit: Futurium by Matthias Süßen - Own work, CC BY-SA 4.0, https://commons.wikimedia.org/w/index.php?curid=111353444